Katherine Cross, who researches online harassment at the University of Washington, says that if virtual reality is immersive and real, then toxic behavior that occurs in that environment is real as well. “Ultimately, virtual reality spaces are designed to trick the user into believing that they are physically in a particular space and that every physical action is taking place in a 3D environment,” she says. “That is one of the reasons why emotional responses can be stronger in that space and why VR triggers the same internal nervous system and psychological responses.”

That was the case with the woman who was groped on Horizon Worlds. According to The Verge, her post read: “Sexual harassment isn’t a joke on the regular internet, but being in VR adds another layer that makes the event more intense. Not only was I groped last night, but there were other people who encouraged this behavior, which made me feel isolated in the plaza [the virtual environment’s central gathering space].”

Sexual assault and harassment in virtual worlds are not new, nor is it realistic to expect a world in which these problems will go away completely. As long as there are people who hide behind their computer screens to evade moral responsibility, such abuse will continue to occur.

Perhaps the real problem has to do with the perception that when you play a game or participate in a virtual world, you enter into what Stanton calls a “developer-player contract.” “As a player, I agree to be able to do what I want in the developer’s world according to its rules,” he says. “But once this contract is broken and I feel uncomfortable, the company’s obligation is to get the player back to where they want to be and get comfortable again.”

The question is: who is responsible for making users comfortable? Meta, for example, says it gives users access to tools to protect themselves, which effectively shifts the responsibility onto them.

“We want everyone in Horizon Worlds to have a positive experience with easy-to-find safety tools, and it’s never the user’s fault if they don’t use all of the features we offer,” said Meta spokeswoman Kristina Milian. “We will continue to improve our user interface and better understand how people use our tools so that users can report things easily and reliably. Our goal is to make Horizon Worlds safe, and we are committed to that.”

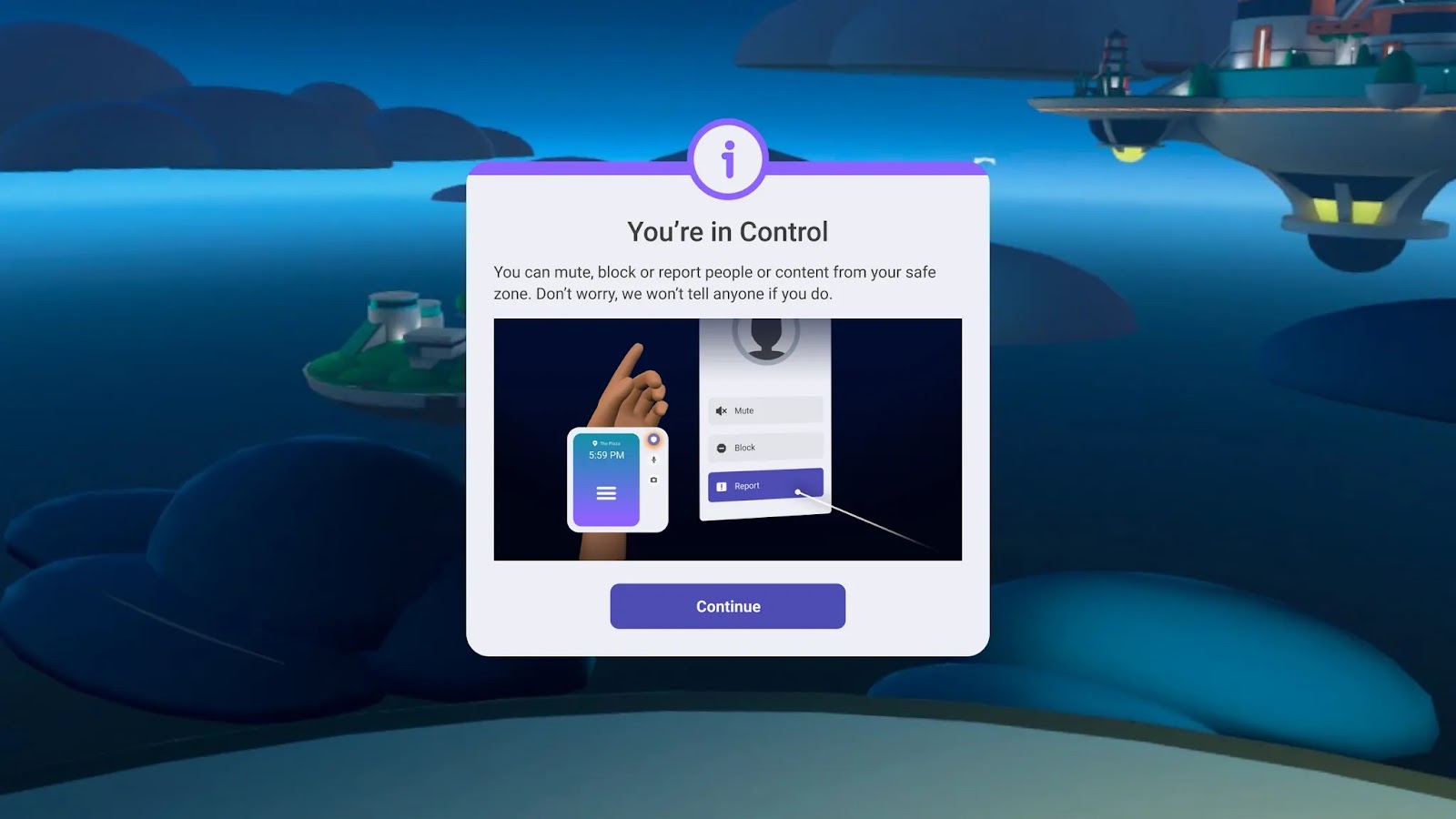

Milian said that before joining Horizon Worlds, users must go through an onboarding process that teaches them how to activate the Safe Zone. She also said that regular reminders are displayed on screens and posters within Horizon Worlds.

Screenshots of the Safe Zone user interface courtesy of Meta

But the fact that the victim of the Meta groping either didn’t think to use the Safe Zone or couldn’t access it is exactly the problem, says Cross. “The structural question is the big issue for me,” she says. “Generally, when companies tackle online abuse, their solution is to outsource it to the user and say, ‘This is where we give you the power to take care of yourself.’”

And that’s unfair and doesn’t work. Safety should be simple and accessible, and there are many ideas for how to make that happen. For Stanton, all it would take is some kind of universal signal in virtual reality, perhaps Quivr’s V-gesture, that could tell the moderators that something was wrong. Fox wonders whether it would help to enforce an automatic personal distance unless two people agreed to be closer to each other. And Cross thinks it makes sense for training sessions to explicitly lay out norms that correspond to those of ordinary life: “In the real world, you wouldn’t touch anyone indiscriminately, and that should be transferred to the virtual world.”

Until we figure out whose job it is to protect users, an important step towards a safer virtual world is to discipline aggressors, who often get away with it and remain free to participate online even after their behavior is discovered. “We need deterrents,” says Fox. That means making sure that bad actors are found and suspended or banned. (Milian said Meta “[doesn’t] provide details of individual cases” when asked what happened to the alleged groper.)

Stanton regrets not having pushed for the industry-wide adoption of the power gesture and not having spoken up about Belamire’s groping incident. “That was a missed opportunity,” he says. “We could have avoided this incident at Meta.”

If anything is clear, it is this: There is no body responsible for the rights and safety of those who participate anywhere online, let alone in virtual worlds. Until something changes, the Metaverse will remain a dangerous, problematic space.